For some reason, existing 2D analysis software for sport does not seem to have any provision for correcting lens distortion or taking it into account¹.

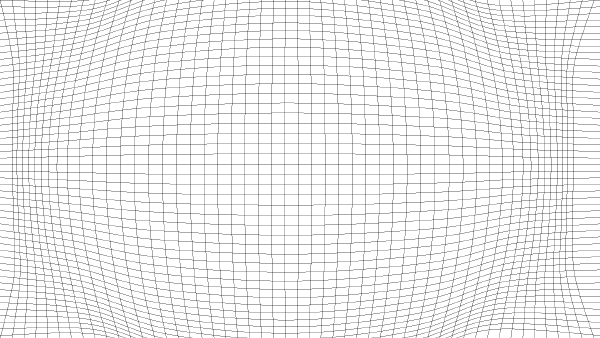

This is unfortunate because 2D measurements assume that all points are coplanar, and lens distortion bends that plane.

Stating the obvious:

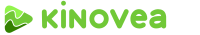

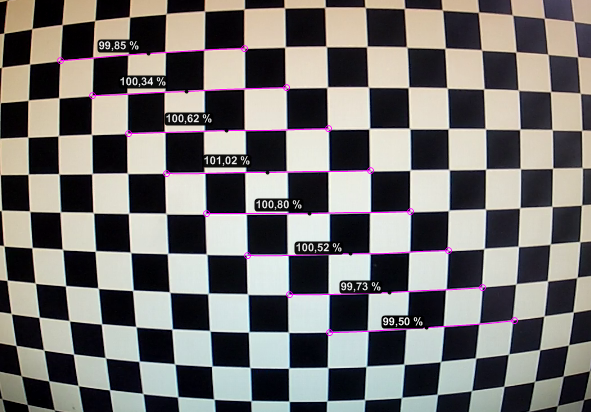

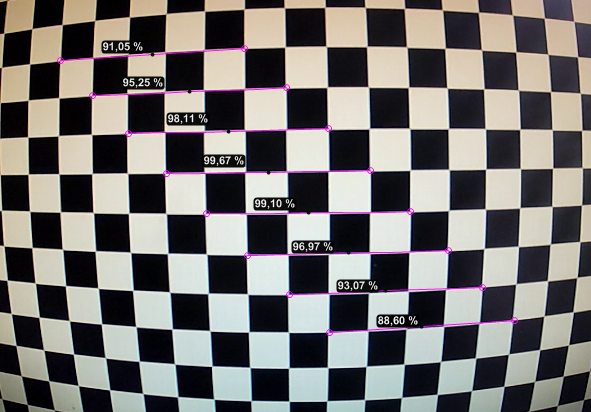

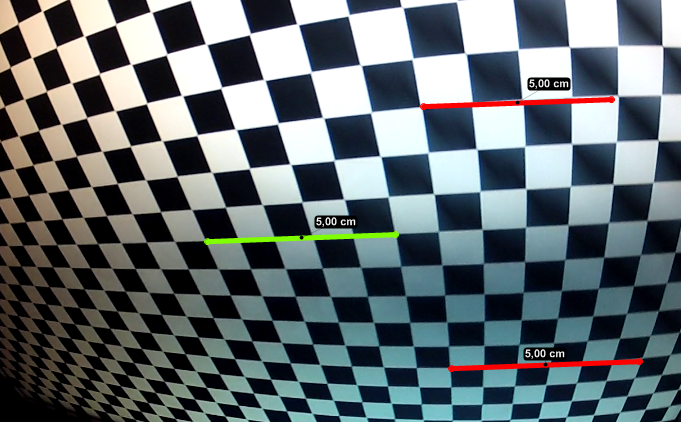

Fig 1. Ridiculous measurements on a checkerboard pattern displayed on a flat LCD screen, filmed with a 170° lens (GoPro Hero 2 at 1080p).

The green line was used for calibration and has a length of 5 squares. The red lines have the same pixel length as the green line, so without any other form of correction they are also reported as 5 squares long, which is obviously wrong.

The wide-angle lens primarily exhibits radial distortion, and no amount of plane alignment or perspective plane calibration is going to fix this.
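
For reference, radial distortion is commonly written with the polynomial model below (often called the Brown-Conrady model). This is only an illustration: nothing here says which model Kinovea will end up using, and the symbols are my own. $(x_u, y_u)$ is the ideal point in normalized coordinates centered on the distortion center, $(x_d, y_d)$ is the same point as imaged by the lens, and $k_1, k_2$ are the radial coefficients.

$$
r^2 = x_u^2 + y_u^2, \qquad
x_d = x_u \,(1 + k_1 r^2 + k_2 r^4), \qquad
y_d = y_u \,(1 + k_1 r^2 + k_2 r^4)
$$

The scale factor depends on $r$, so it changes from point to point across the image. A perspective calibration maps straight lines to straight lines, which is why it cannot remove the curvature seen in Fig 1.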

The currently suggested approach is to first undistort the video in external software and then use the undistorted video for your 2D analysis.

The problems with this approach are that:

- You need to do it for every input video.
- It may degrade the quality of the footage, as it implies re-encoding.
- The software used may be more oriented towards "pleasing to the eye" results than mathematical rigor, and may use a simplified distortion model.

It's not just fisheye lenses. Almost all cameras have some form of distortion even though it's not always readily noticeable.

Here is the plan to integrate lens distortion into Kinovea.

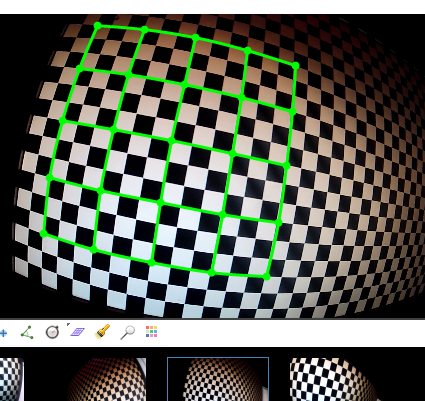

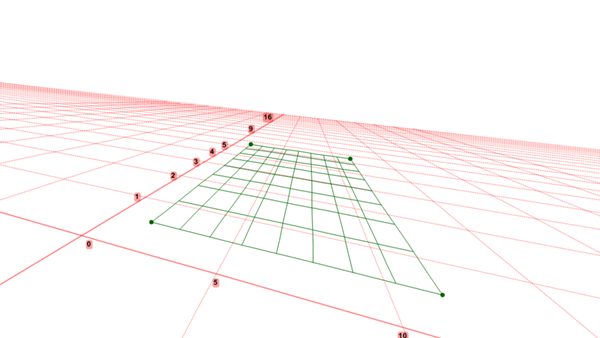

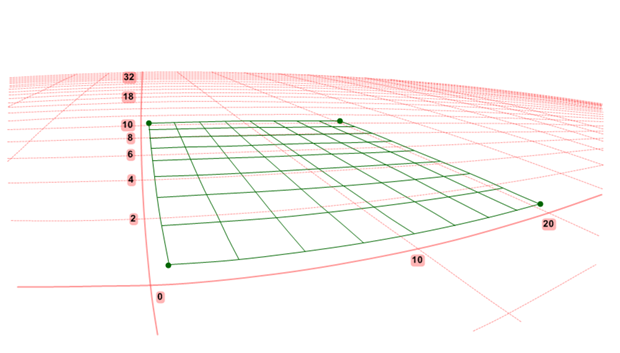

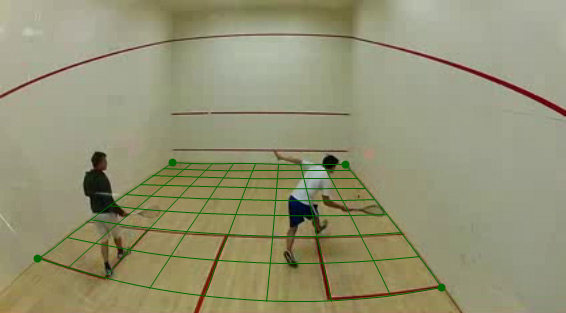

A new drawing tool, "distortion grid", allowing the user to place a grid on top of a checkerboard-like pattern.

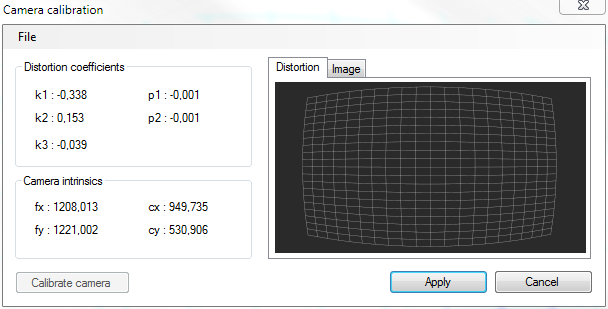

A module to compute lens distortion parameters based on the distortion grids you added throughout the video.

A module to save and load distortion parameters as "profiles", for reuse with all videos from that specific camera.

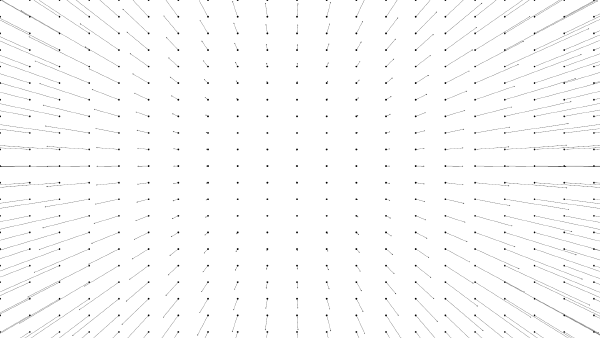

Usage of the distortion parameters to compute undistorted coordinates and usage of these coordinates to make all measurements in Kinovea.

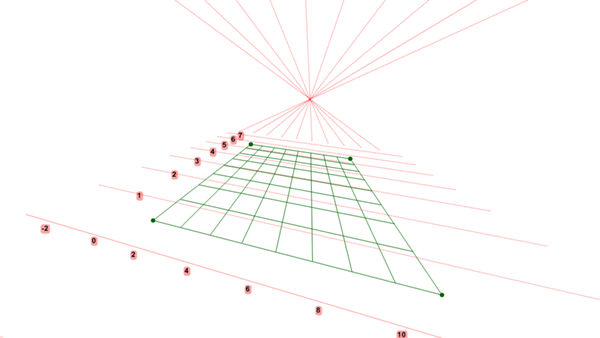

Usage of the distortion parameters to redistort straight lines into curves for display purposes. Interesting for lines, angles, rectangular grids, perspective planes and coordinate systems.

[Nice-to-have] Import of calibration files from third party photogrammetry software.
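
To make the measurement step of the plan more concrete, here is a minimal Python sketch of measuring between undistorted coordinates. It is not Kinovea code. It assumes a two-coefficient radial model, a hypothetical `PROFILE` dictionary with made-up values (`fx`, `fy`, `cx`, `cy`, `k1`, `k2`), and a simple fixed-point iteration to invert the model; the real implementation may differ on all of these.

```python
import math

# Hypothetical distortion profile; every value is invented for illustration.
PROFILE = {"fx": 1000.0, "fy": 1000.0, "cx": 960.0, "cy": 540.0,
           "k1": -0.25, "k2": 0.02}


def _radial_factor(x, y, p):
    """Scale factor of the assumed radial model at normalized point (x, y)."""
    r2 = x * x + y * y
    return 1.0 + p["k1"] * r2 + p["k2"] * r2 * r2


def distort_pixel(px, py, p):
    """Ideal (distortion-free) pixel -> pixel as it appears in the video."""
    x = (px - p["cx"]) / p["fx"]
    y = (py - p["cy"]) / p["fy"]
    f = _radial_factor(x, y, p)
    return x * f * p["fx"] + p["cx"], y * f * p["fy"] + p["cy"]


def undistort_pixel(px, py, p, iterations=50):
    """Pixel from the video -> ideal pixel, by fixed-point iteration.

    The forward model has no simple closed-form inverse, so we start from
    the distorted point and repeatedly divide by the radial factor.
    """
    xd = (px - p["cx"]) / p["fx"]
    yd = (py - p["cy"]) / p["fy"]
    xu, yu = xd, yd
    for _ in range(iterations):
        f = _radial_factor(xu, yu, p)
        xu, yu = xd / f, yd / f
    return xu * p["fx"] + p["cx"], yu * p["fy"] + p["cy"]


def undistorted_length(a, b, p):
    """Distance between two video pixels, measured after undistortion."""
    ax, ay = undistort_pixel(a[0], a[1], p)
    bx, by = undistort_pixel(b[0], b[1], p)
    return math.hypot(bx - ax, by - ay)


# Two segments of identical true length, one near the center, one near the edge.
center = [distort_pixel(x, 540.0, PROFILE) for x in (860.0, 1060.0)]
edge = [distort_pixel(x, 540.0, PROFILE) for x in (1560.0, 1760.0)]
raw_center = math.hypot(center[1][0] - center[0][0], center[1][1] - center[0][1])
raw_edge = math.hypot(edge[1][0] - edge[0][0], edge[1][1] - edge[0][1])
```

With these made-up coefficients the raw pixel length of the edge segment is noticeably shorter than that of the center segment, while `undistorted_length` gives the same value for both. The video frames themselves are never modified; only the coordinates go through the model.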

Note that it is not planned to undistort the images themselves in real time. Rather, I would like to keep the video untouched, but use the distortion parameters for measurements and display.
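
For the display side, the idea of redistorting a straight line can be sketched as follows: sample the ideal segment, push each sample through the forward distortion model, and draw the resulting polyline over the untouched video. Again, this is only a rough Python illustration under my own assumptions (two-coefficient radial model, hypothetical `PROFILE` values, hypothetical function names), not the actual implementation.

```python
# Hypothetical distortion profile; every value is invented for illustration.
PROFILE = {"fx": 1000.0, "fy": 1000.0, "cx": 960.0, "cy": 540.0,
           "k1": -0.25, "k2": 0.02}


def distort_pixel(px, py, p):
    """Ideal (distortion-free) pixel -> pixel as it appears in the video."""
    x = (px - p["cx"]) / p["fx"]
    y = (py - p["cy"]) / p["fy"]
    r2 = x * x + y * y
    f = 1.0 + p["k1"] * r2 + p["k2"] * r2 * r2
    return x * f * p["fx"] + p["cx"], y * f * p["fy"] + p["cy"]


def redistort_segment(a, b, p, samples=16):
    """Turn the ideal straight segment a-b into a polyline in video space.

    The segment is sampled at samples + 1 evenly spaced points and each
    point is distorted individually, so the polyline follows the curvature
    that the lens gives to the real-world straight line.
    """
    points = []
    for i in range(samples + 1):
        t = i / samples
        x = a[0] + t * (b[0] - a[0])
        y = a[1] + t * (b[1] - a[1])
        points.append(distort_pixel(x, y, p))
    return points


# A horizontal line near the top of the ideal image becomes a curve.
top_line = redistort_segment((160.0, 140.0), (1760.0, 140.0), PROFILE)
```

With these values the polyline bulges towards the top edge of the image in the middle and drops at both ends, while a line passing through the distortion center stays straight. The number of samples is a trade-off between smoothness and drawing cost.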

I will use this topic to post updates on the progress of this feature. It may take a while since plenty of other topics are in progress: completion of version 0.8.22, bug fixing, etc. Your feedback is nonetheless highly appreciated.

----

¹: The popular commercial packages for 2D analysis (Dartfish, Quintic) do not seem to have any lens distortion related features. If you know otherwise, please post a comment.

The Centre for Sports Engineering Research at Sheffield Hallam University (UK) has developed software called Check2D. To my knowledge, it is the first effort to address the issue in the context of sport studies. Background and case study (PDF).

Software that rebuilds 3D coordinates from multiple cameras is immune to lens distortion.