Experimental version. As always, feedback is much appreciated!

Beware of regressions and report anything suspicious. Do not assume the issue is known.

----

Special thanks to Milan Djupovac and the folks at Sport Diagnostic Center Šabac (Serbia) for the ongoing testing and support of this version.

And many thanks to the Jumpers Rebound Center in Gillingham (UK) who donated a Microsoft LifeCam Studio for testing purposes.

And also many thanks to DVC Machinevision BV (Netherlands) for the super deal on the Basler camera many months back.

----

This release is almost entirely about cameras and capture.

- Improved capture performance and camera configurability.

- Support for Basler cameras.

- Capture history.

- Other goodies.

1. Improved capture performance and camera configurability

This took up most of the multi-month effort behind this release. In the process I also acquired seven different USB cameras and was donated one.

Parts of the low level capture architecture were rewritten from scratch and gave birth to a new capture "pipeline" with a more direct path from the camera to the disk and an improved multi-threading model.

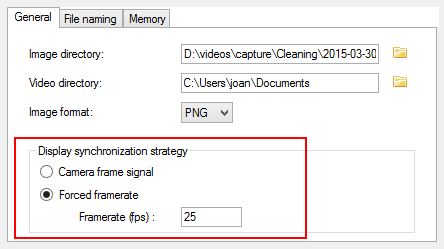

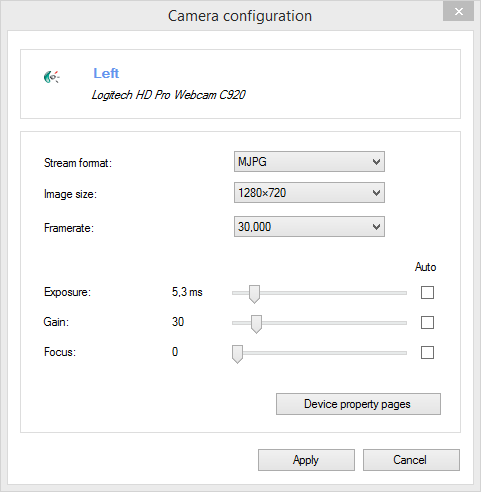

The camera configuration dialog is now more detailed, and lets you choose the precise stream format and framerate, configure exposure duration, gain and focus, when the camera supports it.

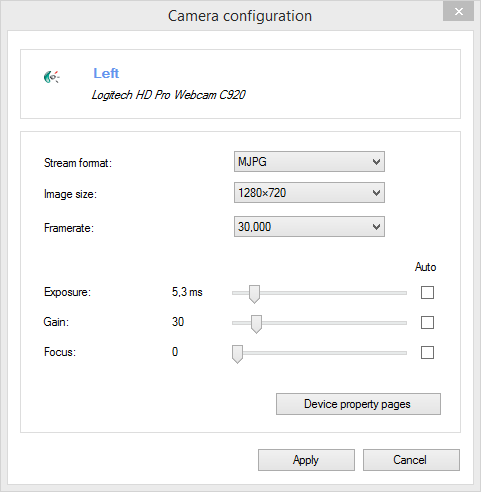

The maximum performance will be reached when using the MJPEG stream format with cameras that have on-board compression, as the stream will be directly pushed to the capture file without any transcoding. This should enable the capture of two full HD streams without frame drops.

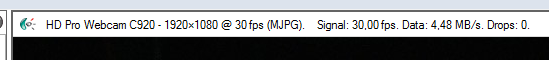

At the top of the camera screen, the status bar contains new information:

- Signal: the actual frame rate received from the camera. It may or may not match the configured frame rate.

- Data: the bandwidth between the camera and Kinovea.

- Drops: the number of frames dropped during the current or last recording session.
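To make the first two readings concrete, here is a minimal sketch of how a frame rate and a bandwidth figure can be derived from a short window of received frames. It is not Kinovea's code: the `Frame` type, the `stream_stats` function and the choice of megabytes per second as the unit are all assumptions made for illustration. Drops are left out because the way they are counted is not described here.

```python
from dataclasses import dataclass


@dataclass
class Frame:
    """Hypothetical record of one received frame."""
    timestamp_s: float  # arrival time, in seconds
    size_bytes: int     # payload size as received from the camera


def stream_stats(frames):
    """Return (frames per second, megabytes per second) over a window of frames.

    The unit for the bandwidth is an assumption; only the arithmetic matters.
    """
    if len(frames) < 2:
        return 0.0, 0.0
    elapsed = frames[-1].timestamp_s - frames[0].timestamp_s
    if elapsed <= 0:
        return 0.0, 0.0
    # N frames span N - 1 intervals, so the first frame only marks the start.
    fps = (len(frames) - 1) / elapsed
    # Bytes that arrived during those intervals, converted to MB/s.
    megabytes_per_s = sum(f.size_bytes for f in frames[1:]) / elapsed / 1e6
    return fps, megabytes_per_s
```

For example, 26 frames of 100,000 bytes each arriving 40 ms apart give about 25 fps and 2.5 MB/s. If the camera is configured for 30 fps but the measured value is 25, that is the kind of mismatch the Signal reading makes visible.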

Whichever stream format is selected as input, the output file is now always MJPEG inside an MP4 container. This is not configurable.

For the live image, another change is the "Display synchronization strategy", to decouple the preview framerate from the captured framerate. I did not find a concise sentence to quickly convey all the implications of this setting, so I'll attempt to describe it in this topic.

2. Support for Basler cameras

This version introduces preliminary support for Basler high-end industrial cameras, through their Pylon SDK.

I was only able to test it with a black and white camera, so if you have access to a color camera, please report how it works for you.

Live view, configuration and recording should all be supported.

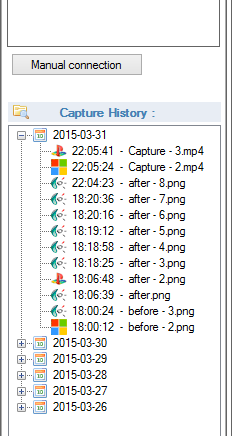

3. Capture history

A little feature that was added almost at the last minute, but I think it could prove quite useful.

Basically each time you make a recording, an entry is saved in the history panel, and from there you can launch the videos.

Note that you can import your current capture directory (or any other directory for that matter) into the history using the button on the left. This can also be useful when you recorded a session on the camera and later dumped the SD card on the main computer.

After some threshold the days are grouped into months.

4. Other goodies

- A new tool "Test grid" under menu Image, for cameras. This can be used to check that the camera is level, locate the center of the image, etc.

- A new timecode "total microseconds" and the ability to select up to one million fps in the high speed camera dialog, for users who have really high speed cameras.
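As a rough illustration of why a microsecond timecode goes together with very high capture rates: at one million fps, consecutive frames are exactly one microsecond apart, so a millisecond-based timecode could no longer tell them apart. The helper below is only a sketch of that arithmetic. The function name and its parameters are hypothetical and do not reflect how Kinovea computes the timecode internally.

```python
def total_microseconds(frame_index, capture_fps):
    """Real elapsed time, in whole microseconds, at a given frame.

    frame_index: zero-based position of the frame in the video (hypothetical name).
    capture_fps: the rate at which the camera actually recorded (hypothetical name).
    """
    if capture_fps <= 0:
        raise ValueError("capture_fps must be positive")
    # One second is 1,000,000 microseconds; each frame lasts 1/capture_fps seconds.
    return round(frame_index * 1_000_000 / capture_fps)
```

With these assumptions, frame 250 of a 10,000 fps recording sits at 25,000 microseconds, and frame 1 of a 1,000,000 fps recording sits at 1 microsecond.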

A number of defects were fixed and even more things were crammed in. Please check the raw changelog for details.

Enjoy!